Posts Tagged ‘Website’

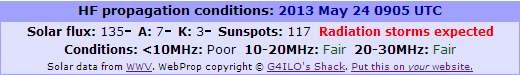

WebProp hiatus

Some time on the afternoon of 21 May WebProp stopped updating. The first person to notice it (actually the only person to notice it) was Mirek, OK1DUB, who sent me an email.

This is a screenshot, not a live instance of the program.

I SFTP’d into the web server to check and, sure enough, the files containing the propagation information extracted from the WWV 3-hourly bulletins had not been updated. They were updated when I ran the script manually, so my script was OK. The likely explanation was that cron, the Linux job scheduler, had stopped running. I filed a ticket with Hawk Host’s support department.
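The manual check described here (looking at whether the output files had been updated) could be automated. The following is only a minimal sketch, not the script WebProp uses: the file name `propagation.txt` and the four-hour threshold are assumptions chosen for illustration, on the basis that the bulletins arrive every three hours.

```python
import os
import time


def stale_files(paths, max_age_seconds, now=None):
    """Return the paths whose last modification is older than max_age_seconds."""
    now = time.time() if now is None else now
    stale = []
    for path in paths:
        try:
            age = now - os.path.getmtime(path)
        except OSError:
            # A missing file counts as stale: the job never produced it.
            stale.append(path)
            continue
        if age > max_age_seconds:
            stale.append(path)
    return stale


if __name__ == "__main__":
    # Hypothetical file name; 3-hourly bulletins plus an hour of slack.
    print(stale_files(["propagation.txt"], max_age_seconds=4 * 3600))
```

A check like this has to run somewhere other than the cron that might have stopped, for example on a home computer, otherwise it fails silently along with everything else.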

They claimed that cron was still running, though the evidence of my own eyes showed that it wasn’t. It took me a while to convince them that there really was a problem but we got there in the end. This morning when I logged on to my computer the latest propagation information was being displayed again. Hopefully my cron jobs will now stay running.

Good or Evuln?

A few weeks ago one of our websites was hacked. We didn’t notice for almost a week due to browsers caching the pages. The consequence of the hack was that every page accessed returned a 404 error meaning that the page was not found. During that week Google’s spiders visited with the result that almost the entire site was lost from the search engine.

I discovered the hack just in time to be able to restore from my web host’s oldest backup. It was a real hassle as well as a stressful time and I wanted to find a way to alert me more quickly if it happened again.

My first thought was to use ChangeDetection.com, the site I use to alert me when a change occurs to the IBP Beacon Status page. That was no good as both sites contain dynamic content that changes frequently.
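Comparing page content is noisy on a dynamic site, but the hack described above showed up as an error status on every page, so watching the HTTP status code is one possible alternative. The sketch below is an assumption about how that might be done, not a service I actually use; the function names are made up for illustration. Fetching from a script also avoids the browser cache that hid the problem for a week.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=10):
    """Return the HTTP status code for url, or None if the server is unreachable."""
    # Ask any caches along the way to revalidate rather than serve a stored copy.
    request = urllib.request.Request(url, headers={"Cache-Control": "no-cache"})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # urllib raises 4xx and 5xx responses as exceptions; the code is what we want.
        return err.code
    except OSError:
        # Covers URLError (DNS failure, refused connection) and timeouts.
        return None


def failing_pages(statuses):
    """Given a {url: status} mapping, return the URLs that did not answer 200."""
    return sorted(url for url, status in statuses.items() if status != 200)
```

To use it, build the mapping with something like `{url: fetch_status(url) for url in pages}`, where `pages` is a short list of your own URLs, and send yourself an email whenever `failing_pages` returns anything.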

A couple of days ago I was visiting some ham sites and I came across one with a badge stating that the site was scanned and malware free. I clicked on the badge and it took me to evuln.com, a site containing a lot of information about how to secure a website and offering tools to detect an attack.

Tools include a malware scanner that will check your site to see if it contains something bad. You can have this check run daily for free if you display a badge on the site. This appeared to be just what I was looking for, so I registered with the site and added the badge to both G4ILO’s Shack and ham-directory.com.

Evuln.com also offers a service to clean and fix websites. This is something I might well have used a few weeks ago if my web host’s support hadn’t been helpful in assisting me to identify the hacked files. But the cynic in me rang an alarm bell. It would be in evuln.com’s interest to claim that my site was hacked and then offer to clean it up for a fee. What a good scam! In fact the owners of a couple of sites that had been told they had been hacked thought it was a scam and that their site had been hacked by evuln.com!

So is evuln.com good or evil? I did a lot of digging. I think that if it were a scam I would have found a lot more evidence of people who had been scammed. Evuln.com has been running for several years and contains good information. The owner replies promptly to enquiries. There is an address and contact information on the site. I believe that evuln.com is a genuine attempt to provide a useful service.

I have since found other similar services such as ScanMyServer which do not offer a site cleanup service. Come to your own conclusion.

Is it just me?

For the last few weeks, when I browse the pages in G4ILO’s Shack using the Google Chrome browser, they start displaying in a plain text sort of mode.

How pages look in Google Chrome

How that page is supposed to look

I think what’s happening is that the CSS style sheet isn’t being loaded, so the pages are appearing without any formatting. But why? This doesn’t happen in Firefox. It didn’t use to happen in Chrome. And it doesn’t happen in Chrome after I clear the cache. After that the next few pages display OK until it eventually happens again.

Is it just me, or is this happening to everyone who visits my site using Google Chrome?

Hacked off

This is not much to do with radio. But I know that many of you have your own websites and will probably find this of interest.

A couple of days ago I discovered that one of my websites had been hacked. Not G4ILO’s Shack, but the other one, which still continues to earn us a little bit despite receiving only the barest maintenance in the last two years.

I opened one of the pages and instead of the expected content a server error message appeared. My first thought was that the hosting company had changed some setting so I fired off an urgent support ticket. They responded saying that some of my files had been “compromised”. Sure enough when I looked at one of the files there was some code I didn’t recognize. This code referred to a file that had been added which was zero length, and that was causing a 500 server error. I deleted the file and every access now caused a 404 “file not found” error. Eventually I found that the .htaccess file had been hacked and some code added which was being executed for every single file access.

The timestamp showed that the .htaccess had been modified a week ago on 19th March. Because of the web browser caching we had not noticed the error messages any earlier. Google had visited the site in that time however, and had received a server error for every page it tried to access. So now the site had dropped out of Google. Thanks a lot, hackers.

Further investigation revealed that the hackers had modified almost every .php file on the server. They had inserted some code at the beginning of every file, apparently meant to disable error reporting. They had inserted some other code into one .php file that was included in every page. However, something in what they had done had the effect of disabling PHP processing with the result that the PHP code was sent to the browser instead of being executed.
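The modification timestamp was what gave the .htaccess change away, and the same idea can be used to list every file touched since a given date. This is a rough sketch under the assumption that you have a local copy of the site (for example downloaded by SFTP with timestamps preserved); `modified_since` is a name invented here. Bear in mind that an attacker can reset timestamps, so an empty result proves nothing.

```python
import os
from datetime import datetime


def modified_since(root, cutoff):
    """Return paths under root whose modification time is at or after cutoff."""
    cutoff_ts = cutoff.timestamp()
    hits = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            if os.path.getmtime(path) >= cutoff_ts:
                hits.append(path)
    return sorted(hits)
```

Called with the folder holding the site and a `datetime` for the day of the attack, it returns the list of files worth opening first.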

To cut a long story short, after trying to repair the hacked files individually, I decided to restore the site from the oldest backup the hosting company held. I had a little bit of luck: the oldest backup was taken on 19th March, the day of the attack, but it had run before the attack occurred so I was able to restore the site with every file as it was originally. A day later and that backup would have gone and I would have been unable to restore the site without a lot of manual work. But the damage had been done as far as Google was concerned.

If you are expecting a lesson to be learned as a result of this story, I don’t have one, other than if you want a quiet life stick to blogging, don’t try to run your own website. If you do, visit your site every day and check for changes.
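Checking for changes by eye is tedious, so one option is to record a hash of every file while the site is known to be good and compare against it later. The following is only a sketch of that idea, assuming the files are reachable as an ordinary folder (a local mirror of the site, say); the function names are illustrative, and the stored manifest would need to be kept somewhere the attacker cannot reach.

```python
import hashlib
import os


def build_manifest(root):
    """Map each file's path (relative to root) to the SHA-256 of its contents."""
    manifest = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            with open(path, "rb") as handle:
                digest = hashlib.sha256(handle.read()).hexdigest()
            manifest[os.path.relpath(path, root)] = digest
    return manifest


def compare_manifests(old, new):
    """Return (added, removed, changed) lists of relative paths."""
    added = sorted(set(new) - set(old))
    removed = sorted(set(old) - set(new))
    changed = sorted(p for p in set(old) & set(new) if old[p] != new[p])
    return added, removed, changed
```

Because this hashes the source files rather than the rendered pages, dynamic content does not trigger false alarms, while a modified .htaccess or an injected line at the top of a .php file would appear in the `changed` list.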

I have no idea how the hacker managed to gain access to the files on my shared web server. If they did it once they could do it again. I don’t believe that my passwords were compromised as they are randomly-generated, but I changed them anyway. Altogether this episode lasted for several stressful hours – time that I would much rather have spent trying out the latest WSJT-X program.

RSGB Centenary

It’s fashionable for British hams to knock the RSGB. But I have never been much influenced by fashion.

The RSGB will be 100 years old in 2013. No doubt there will be all sorts of celebrations, a special event station and so on.

To mark the occasion I will display the RSGB centenary badge on my blog and my website until the end of 2013. I think it would be a good idea if more RSGB members who have blogs and web pages did the same.

Here is a snippet of code to make it easy for you to add this to your website:

It’s an image not text so you can’t cut and paste it – Blogger kept interpreting it as HTML code so this was the only way I could find to include it!

Beacon monitor back online

For the time being I have put my IBP Beacon Monitor page back online. As I mentioned in a previous post, this is really something that needs to run 24/7 to be of most value. I note that I am not the only beacon monitor who states that monitoring runs only when not otherwise using the radio and antenna. So perhaps I will manage to keep it going for a bit longer than previously.

I updated the list of other beacon monitoring stations at the bottom of the page, deleting those that did not appear to be active. The official NCDXF/IARU International Beacon Project beacon monitors page has a lot of dead links on it.

It’s interesting to take a look and see what propagation is like in other parts of the world. It’s a pity there aren’t more beacon monitors in the USA. And is propagation really that good in VK-land?

I like the additions F4CWH has made to his beacon monitor pages. I wonder if he would share with me how he has done it? I would particularly like to indicate which beacons are off the air. Three of them, including the one on the east coast of the USA (New York), are not operating at the moment.

KComm is 2.0!

I have taken advantage of the poor propagation conditions – the WSPR application waterfall has been blank all day and just two stations have spotted my 10m beacons, while APRS on 30m is only just beginning to receive any other stations – to make available a new version of my logging program for Elecraft transceivers, KComm, which is now version 2.0.

The main difference in the new version is that the Elecraft KX3 is supported (though it could be used in older versions by pretending it is a K3). I have also added an option for specifying alternative URLs such as QRZCQ.com for looking up callsigns, so you can now say goodbye to logging in to QRZ.com every five minutes if you want to.

The other changes are all minor bug fixes and small improvements that probably no-one will notice.

My regrets to Linux users but I no longer have a Linux system available so I cannot provide a Linux archive of the new version. I really need a Linux user to install Lazarus and compile the source code then send me a new tar.gz file to put on the web site.